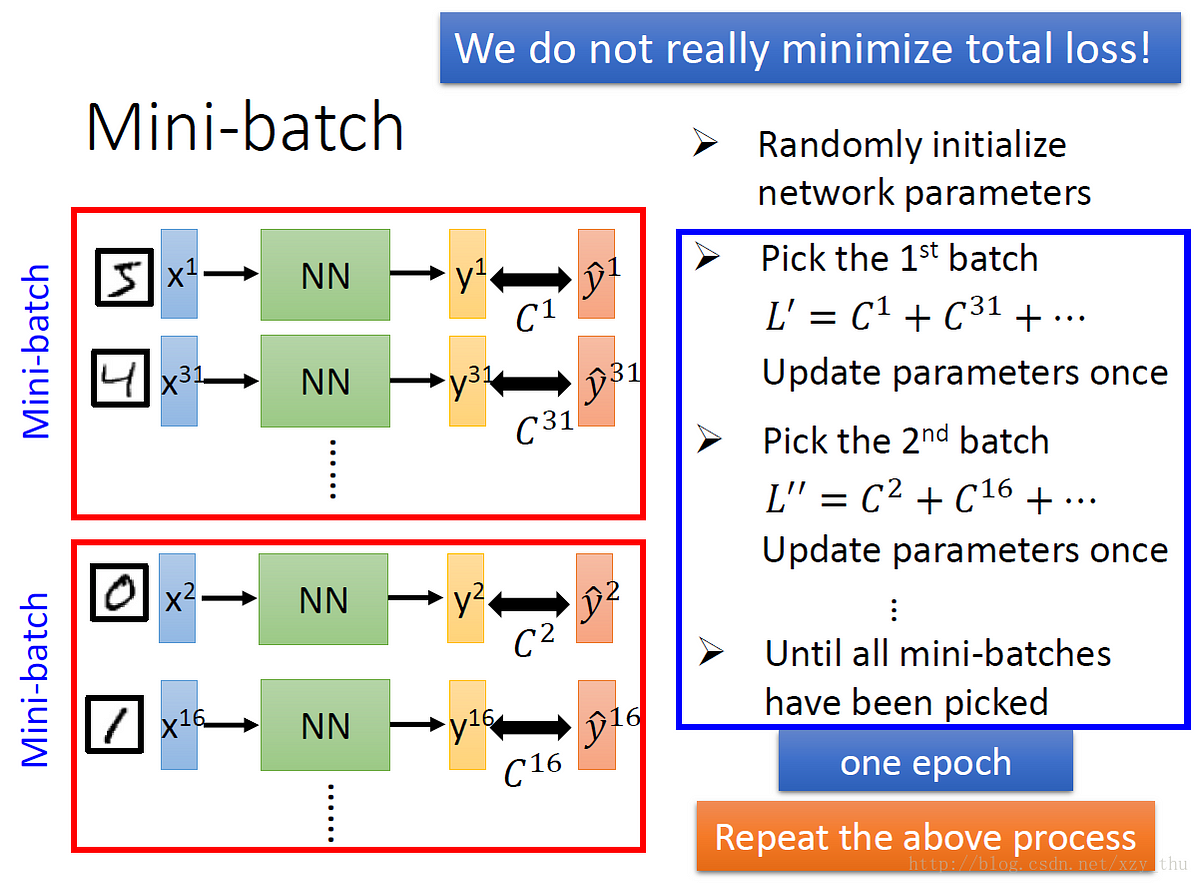

reduces the variance of the parameter updates, which can lead to more stable convergence. Common mini-batch sizes range between 50 and 256, but can vary for different applications.

SGD never actually converges the way batch gradient descent does; instead, it ends up wandering around a region close to the global minimum.

How does mini-batch gradient descent work?

Mini-batch gradient descent is the most favorable and widely used variant: it produces accurate results quickly by working on a batch of ‘m’ training examples. Rather than using the complete data set, every iteration uses a set of ‘m’ training examples, called a batch, to compute the gradient of the cost function.
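The mini-batch procedure can be sketched in Python as follows. This is only an illustrative sketch for linear regression: the mean-squared-error cost, the function name `minibatch_gradient_descent`, the learning rate `alpha`, the epoch count, and the toy data are all assumptions, not taken from the article. The argument `batch_size` plays the role of the ‘m’ examples per batch.

```python
import numpy as np

def minibatch_gradient_descent(X, y, batch_size=2, alpha=0.1, n_epochs=500, seed=0):
    """Linear regression fitted with mini-batch gradient descent (assumed MSE cost)."""
    rng = np.random.default_rng(seed)
    n_examples = X.shape[0]
    Xb = np.hstack([np.ones((n_examples, 1)), X])  # column of ones for theta_0
    theta = np.zeros(Xb.shape[1])                  # initial parameter values
    for _ in range(n_epochs):
        order = rng.permutation(n_examples)        # reshuffle the data each epoch
        for start in range(0, n_examples, batch_size):
            idx = order[start:start + batch_size]  # indices of the current batch
            error = Xb[idx] @ theta - y[idx]       # prediction error on the batch
            gradient = Xb[idx].T @ error / len(idx)
            theta = theta - alpha * gradient       # update all parameters together
    return theta

# Toy data generated from y = 1 + 2x (hypothetical, noise-free).
X_toy = np.array([[0.0], [1.0], [2.0], [3.0]])
y_toy = np.array([1.0, 3.0, 5.0, 7.0])
theta_mb = minibatch_gradient_descent(X_toy, y_toy)
```

Setting `batch_size` to 1 gives a stochastic-style update, and setting it to the full data set size gives the batch version, which is why mini-batch is often described as a compromise between the two.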

#BATCH GRADIENT DESCENT UPDATE#

Taking the first step: pick the first training example and update the parameters using this example. Taking the second step: pick the second training example and update the parameters using this example, and so on for all ‘m’ examples.

#BATCH GRADIENT DESCENT CODE#

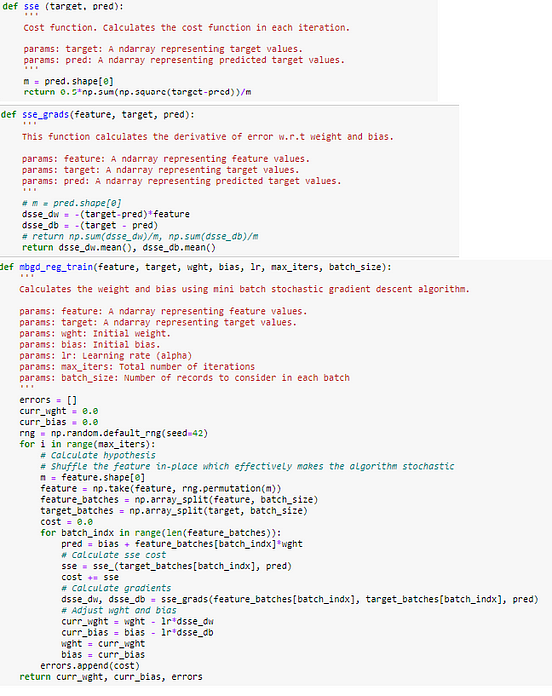

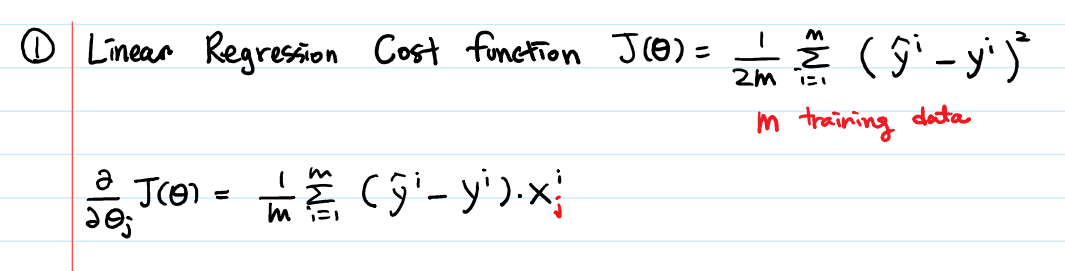

Where ‘m’ is the number of training examples. This is the relation used to calculate the gradient of the cost function once the parameters have been initialized with arbitrary values. The accompanying code implements gradient descent in Python.

How does stochastic gradient descent work?

Batch gradient descent turns out to be a slow algorithm, so for faster computation we prefer stochastic gradient descent (SGD). The first step of the algorithm is to randomize the whole training set. Then, to update every parameter, we use only one training example in each iteration to compute the gradient of the cost function. Because it uses a single training example per iteration, this algorithm is faster on large data sets. With SGD one might not reach the same accuracy, but the results are computed faster. The accompanying pseudo code describes stochastic gradient descent.
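As a rough illustration of the two stages described for SGD (randomize the training set, then update from one example at a time), here is a minimal Python sketch. It assumes linear regression with a squared-error cost; the function name `stochastic_gradient_descent`, the learning rate `alpha`, the number of passes, and the toy data are hypothetical choices made for this example.

```python
import numpy as np

def stochastic_gradient_descent(X, y, alpha=0.05, n_epochs=500, seed=0):
    """Linear regression fitted with SGD: one training example per update."""
    rng = np.random.default_rng(seed)
    m = X.shape[0]                            # number of training examples
    Xb = np.hstack([np.ones((m, 1)), X])      # column of ones for theta_0
    theta = np.zeros(Xb.shape[1])             # arbitrary initial parameters
    for _ in range(n_epochs):
        for i in rng.permutation(m):          # randomize the order of the training set
            error = Xb[i] @ theta - y[i]      # error on a single example
            theta = theta - alpha * error * Xb[i]  # update using that example only
    return theta

# Toy data generated from y = 1 + 2x (hypothetical, noise-free).
X_sgd = np.array([[0.0], [1.0], [2.0], [3.0]])
y_sgd = np.array([1.0, 3.0, 5.0, 7.0])
theta_sgd = stochastic_gradient_descent(X_sgd, y_sgd)
```

On noisy data the parameters would keep fluctuating around the minimum rather than settling exactly, so in practice the learning rate is often reduced over time.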

Gradient descent is an iterative algorithm for finding a global minimum of an objective function (cost function) \(J(\theta)\). It is widely used in machine learning for minimizing functions. Gradient descent algorithms are categorized by their accuracy and time cost, both of which are discussed in detail in this article. This tutorial presents the algorithm for reaching the objective illustrated in the accompanying picture.

We will use linear regression as the running example when talking about gradient descent, although the ideas apply to other algorithms too. In linear regression we have a hypothesis function \(H(X)=\theta_0+\theta_1X_1+\ldots +\theta_nX_n\), where \(\theta_0,\theta_1,\ldots, \theta_n\) are parameters and \(X_1,\ldots, X_n\) are input features.

We use gradient descent to minimize functions like \(J(\theta)\). The first step is to initialize the parameters to some value; we then keep changing these values until we reach the global minimum. In every iteration we calculate the derivative of the cost function and update the values of all parameters simultaneously.
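The simultaneous update is commonly written as \(\theta_j := \theta_j - \alpha \, \partial J(\theta)/\partial \theta_j\) for every \(j\), where the learning rate \(\alpha\) is a symbol assumed here rather than taken from the article. A minimal Python sketch of this batch update for the linear regression hypothesis might look like the following; the mean-squared-error cost, the function name `batch_gradient_descent`, the iteration count, and the toy data are illustrative assumptions.

```python
import numpy as np

def batch_gradient_descent(X, y, alpha=0.1, n_iters=1000):
    """Fit H(X) = theta_0 + theta_1*X_1 + ... + theta_n*X_n with batch gradient descent.

    Assumes the cost J(theta) = (1 / (2m)) * sum((H(x_i) - y_i) ** 2).
    """
    m = X.shape[0]                          # number of training examples
    Xb = np.hstack([np.ones((m, 1)), X])    # column of ones for theta_0
    theta = np.zeros(Xb.shape[1])           # initialize the parameters
    for _ in range(n_iters):
        error = Xb @ theta - y              # H(x_i) - y_i for every example
        gradient = Xb.T @ error / m         # partial derivatives of J for all theta_j
        theta = theta - alpha * gradient    # update every parameter simultaneously
    return theta

# Toy data generated from y = 1 + 2x (hypothetical, noise-free).
X_bgd = np.array([[0.0], [1.0], [2.0], [3.0]])
y_bgd = np.array([1.0, 3.0, 5.0, 7.0])
theta_bgd = batch_gradient_descent(X_bgd, y_bgd)
```

Computing the whole gradient vector before touching `theta` is what makes the update simultaneous: no parameter is changed using a partially updated set of the others.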